The OpenEdge DBA Files

When the Crowd Strikes!

ProTop saw the issues first and sent out warnings to our customers.

Introducing the new ProTop Portal!

The long-standing ProTop Portal has gotten a facelift, and it was about time! We've added many new features and user experience improvements across the board.

ProTop isn't just for DBAs!

It's for developers too! Read on to see how ProTop can inform your development lifecycle by helping you uncover issues before they reach production. Oh, and it's free!

Are you experiencing memory issues on Linux?

Has your OpenEdge Database Application fallen victim to the Out Of Memory (OOM) Killer? Are you hunting for memory leaks? Use ProTop's "dirty memory" script on Linux to see which processes hold more private memory than others.

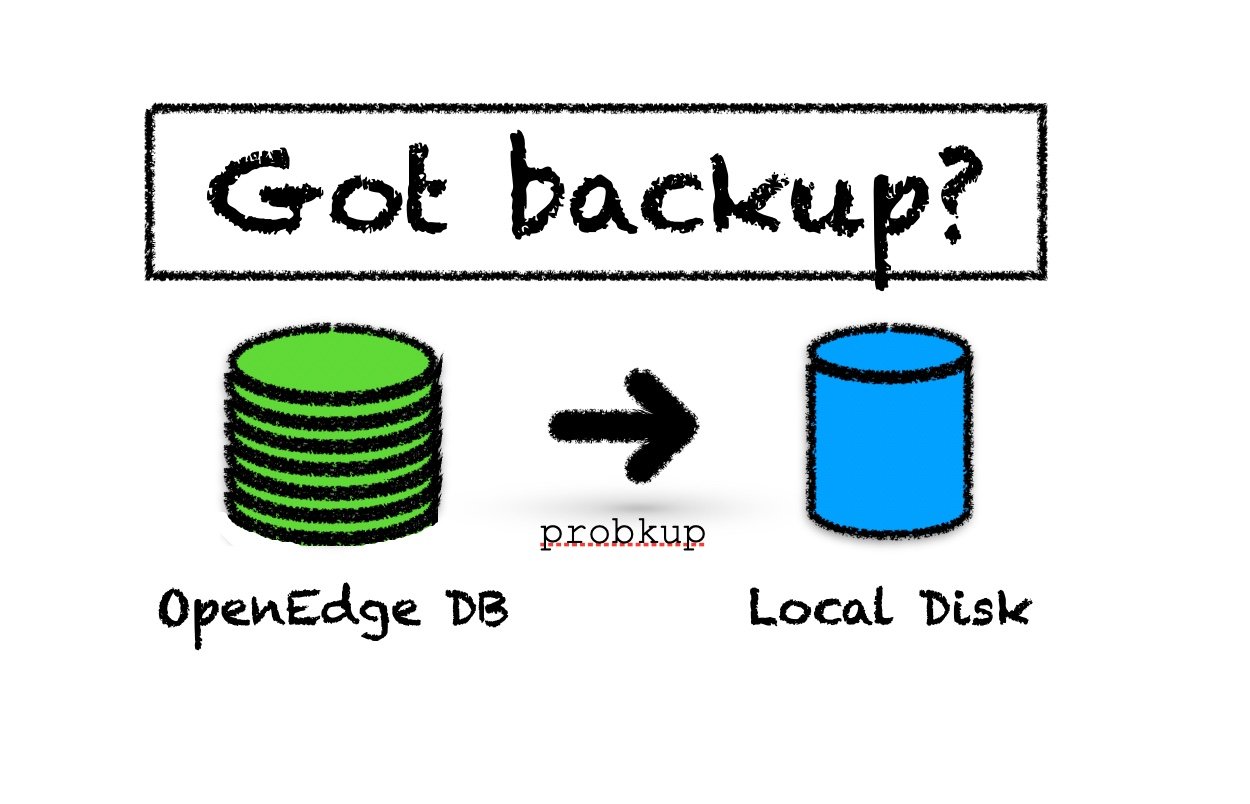
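To make the idea concrete, here is a minimal Python sketch of how private dirty memory can be totalled per process on Linux. It is not ProTop's script. It assumes the kernel exposes `Private_Dirty` lines in `/proc/<pid>/smaps`, and the function names `private_dirty_kb` and `rank_processes` are made up for this illustration.

```python
import re
from pathlib import Path

# Matches smaps lines such as "Private_Dirty:        12 kB".
_PRIVATE_DIRTY = re.compile(r"^Private_Dirty:\s+(\d+) kB", re.MULTILINE)


def private_dirty_kb(smaps_text: str) -> int:
    """Sum every Private_Dirty entry (in kB) found in smaps-formatted text."""
    return sum(int(kb) for kb in _PRIVATE_DIRTY.findall(smaps_text))


def rank_processes(proc_root: str = "/proc") -> list[tuple[int, int]]:
    """Return (pid, private_dirty_kb) pairs, largest first.

    Processes whose smaps file cannot be read (already exited, or
    insufficient permissions) are skipped rather than reported as zero.
    """
    totals = []
    for entry in Path(proc_root).iterdir():
        if not entry.name.isdigit():
            continue  # not a process directory
        try:
            text = (entry / "smaps").read_text()
        except OSError:
            continue
        totals.append((int(entry.name), private_dirty_kb(text)))
    return sorted(totals, key=lambda item: item[1], reverse=True)
```

Run as root to see every process; an unprivileged user will generally only get totals for their own processes.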

OpenEdge database backup best practices

You might imagine there are many ways to back up an OpenEdge database. But not all of them are created equal. And a backup you haven't test-restored doesn't count!

Cardinal rule #1 - Use probkup

- Do it daily during a lull in user activity - no reason not to. With the ability to back up online, business is not interrupted.

- Buy more disks if you have to, but back up to a local drive, uncompressed. This way, the backup is ready to restore (and to roll AI extents or archives forward against), which shortens recovery and helps you meet your recovery time objective (RTO).

- You must use it if you use OE Replication, as it's the only way to reseed a replication target.

- Watch out! It might slow down application access to data stored in Type I storage areas (What? Still using Type I storage areas? tsk tsk).

- Disk reads will jump while the backup reads blocks not already in memory, dropping your buffer hit rate. But you'll likely not feel it if you stick to the first bullet above.

Cardinal rule #2 - Back up ALL of the files needed to effect a restore

- Execute a prostrct list as a part of your backup routine and include the structure file in the files you back up.

- Include the keystore if TDE is enabled in the database.
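As a rough sketch of rule #2, the Python below builds the `prostrct list` command and assembles the set of files a backup job should carry. The helper names, the example paths, and the idea of passing the keystore location in as a parameter are all assumptions for illustration; check where your TDE keystore actually lives before relying on it.

```python
from pathlib import Path
from typing import Optional


def prostrct_list_command(db_path: str, st_file: str) -> list[str]:
    """Build the command that writes the current structure to st_file."""
    return ["prostrct", "list", db_path, st_file]


def backup_file_set(backup_file: str, st_file: str,
                    keystore: Optional[str] = None) -> list[str]:
    """Files that must travel together so a restore is possible.

    keystore is only needed when TDE is enabled; its path is supplied
    by the caller (hypothetical parameter, not discovered automatically).
    """
    files = [backup_file, st_file]
    if keystore:
        files.append(keystore)
    return files


def missing_files(files: list[str]) -> list[str]:
    """Return the entries that do not exist on disk, so the job can fail loudly."""
    return [name for name in files if not Path(name).is_file()]
```

A backup script could run the `prostrct list` command with `subprocess.run(..., check=True)` before the backup and refuse to report success while `missing_files` returns anything.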

Cardinal rule #3 - Don't rely on incremental backups

- They can take just as long and create a similar-sized backup.

- They add unnecessary complexity (see the exception below).

- You still need a full backup before you can take incremental backups.

- If you do not perform full backups regularly, incremental backups will consume more and more backup media, and recovery will take longer because you must restore every incremental backup in addition to the full backup.

- EXCEPTION: If an application database undergoes an extended period of very high write volume, taking periodic incremental backups may be less I/O intensive than writing to lots of large AI extents. Test it!

- If you are using after-imaging (you are, right?), there is no reason for incremental backups (except for the exception above!).
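The growing restore chain is easy to see with a little arithmetic. The sketch below assumes one incremental backup per day since the last full backup; the function name and the labels are invented for this example.

```python
def restore_chain(days_since_full: int) -> list[str]:
    """List the backups to restore, in order, assuming one incremental
    backup per day since the last full backup."""
    if days_since_full < 0:
        raise ValueError("days_since_full must be zero or greater")
    incrementals = [f"incremental-{day}" for day in range(1, days_since_full + 1)]
    return ["full"] + incrementals
```

With a weekly full backup, a failure on day six means restoring seven backups in sequence (`len(restore_chain(6))` is 7), and any one unreadable incremental breaks the chain after it.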

probkup switches to use

- -com - reduces the number of I/O operations needed to create the backup file and reduces the backup file size (may slow the backup down on high-speed systems, so test!).

- -verbose - to know where you are.

- On 11.3.x or 11.4.0 - use "-bibackup all" to work around the prorest bug (fixed in 11.5.0). Without it, the backup may be impossible to restore, which renders it useless!
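Putting the switches above together, a backup job might assemble its command line along these lines. This is a minimal sketch: the function name and keyword arguments are hypothetical, and the `-bibackup all` spelling should be checked against the documentation for your release before use.

```python
def probkup_command(db_path: str, backup_file: str, *,
                    online: bool = True,
                    compress: bool = False,
                    verbose: bool = True,
                    bibackup_all: bool = False) -> list[str]:
    """Build a probkup argument list from the options discussed above."""
    cmd = ["probkup"]
    if online:
        cmd.append("online")  # back up without shutting the database down
    cmd += [db_path, backup_file]
    if compress:
        cmd.append("-com")  # fewer I/O operations, smaller file; test the speed
    if verbose:
        cmd.append("-verbose")  # progress messages while the backup runs
    if bibackup_all:
        # Assumed spelling of the 11.3.x / 11.4.0 workaround switch.
        cmd += ["-bibackup", "all"]
    return cmd
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` so that a non-zero exit status stops the job instead of passing silently.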

Cardinal rule #4 - Test it, regularly

Document and exercise your restore process (prorest) regularly to verify it (still) works!
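One way to make the restore test routine is to script it. The sketch below restores a backup into a scratch database with prorest and reports whether the command exited cleanly. The function names and the injectable `runner` argument are assumptions for illustration, and it assumes the scratch target does not already exist so that prorest has nothing to prompt about.

```python
import subprocess


def prorest_command(target_db: str, backup_file: str) -> list[str]:
    """Build the prorest command that restores backup_file into target_db."""
    return ["prorest", target_db, backup_file]


def run_restore_test(target_db: str, backup_file: str,
                     runner=subprocess.run) -> tuple[bool, str]:
    """Run the restore and return (succeeded, captured output).

    runner is injectable so the logic can be exercised without a real
    OpenEdge installation.
    """
    result = runner(prorest_command(target_db, backup_file),
                    capture_output=True, text=True)
    return result.returncode == 0, result.stdout
```

A clean exit only shows that the restore completed; a thorough test would also open the restored database and run a few sanity checks against it.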

The Cure for Chaos: Is DIY Worth the Time, Effort and Risk?

Now more than ever, the marketplace is flooded with tools that can support, automate, and/or analyze practically any aspect of your application lifecycle. Many of these tools are widely adopted and open source (read: common and free). Does common mean best-of-breed? Is stringing together free (often unsupported) open source solutions a prudent strategy for managing enterprise applications?

Should you DIY?

In this blog, Randall K. Harp, Roundtable Software Engineer, and Jaclyn Barnard, Roundtable Director of Business Development, highlight several key considerations that can help you decide if a do-it-yourself strategy is the best bet for your team.

Diet for a fat client

Gone are the days when a client can snack on fast food records served up directly from the McServer. Reading records one at a time over a network connection is like consuming empty calories. A client so fat that it can’t move or function quickly is not a healthy situation.

New Programmer Series: Setting up your IDE

Today’s blog post comes from Jaison Antoniazzi, a 20+ year OpenEdge expert from Brazil and author of two books in Portuguese on OpenEdge.

Jaison has embarked on an ambitious project for 2022: 365 days of Progress OpenEdge development tips, which he is sharing on LinkedIn!